Meridian.ai

Led design for an AI-native Revenue Cycle Management (RCM) platform that helps healthcare providers prevent revenue leaks in real time.

Role — Lead Designer

Company — Kyndryl

Client — MetLife

Duration — Jul 2025 - Dec 2025

Team — Insurance SMEs, Account Manager, Project Manager, 1 SWE, 1 Cloud Architect

Problem

RCM teams were logging into more than 20 systems a week and manually stitching together dashboards from stale data. By the time they spotted a leak, it was usually too late to recover, and with margins under 1%, they couldn’t afford to miss anything.

Strategy

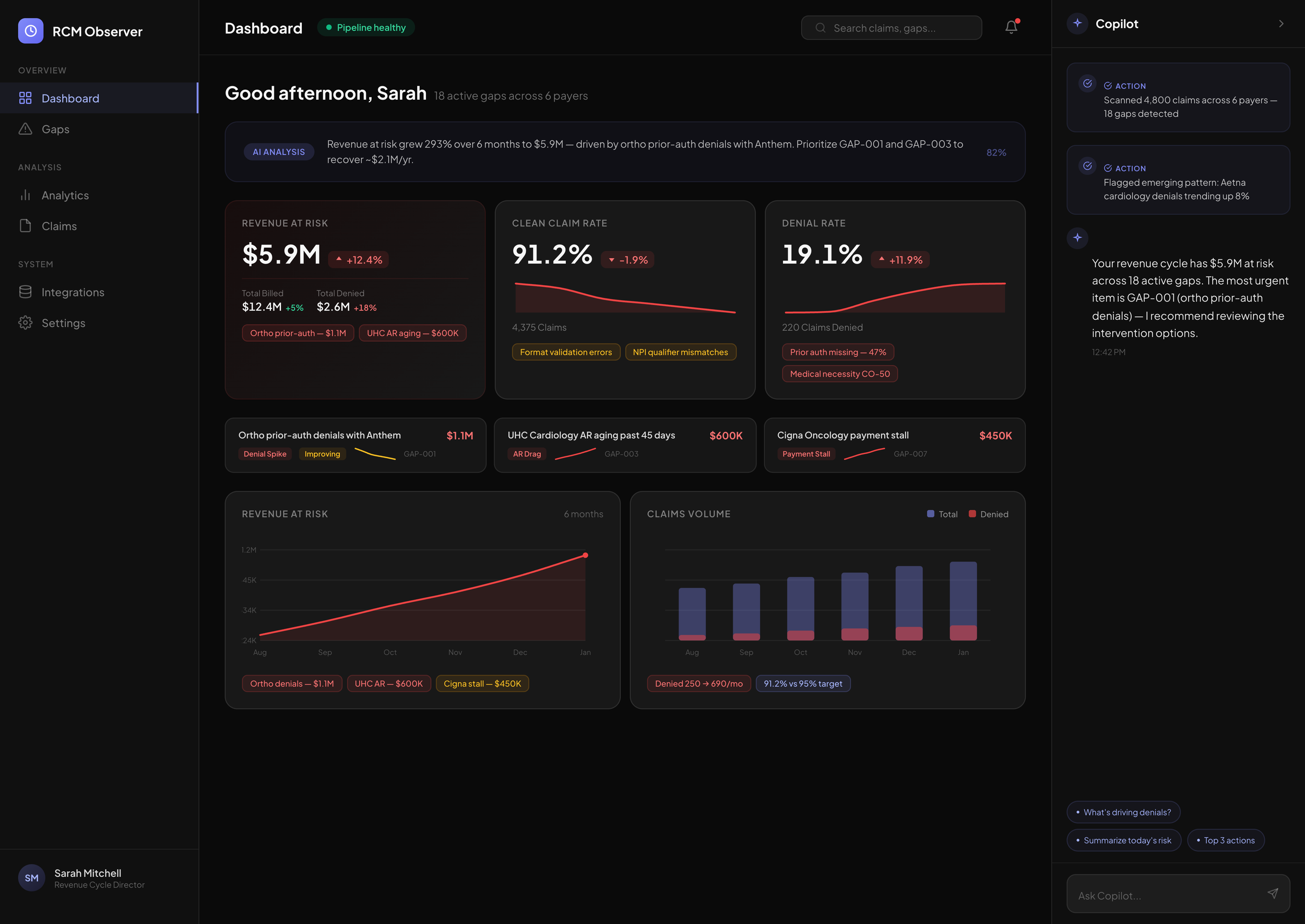

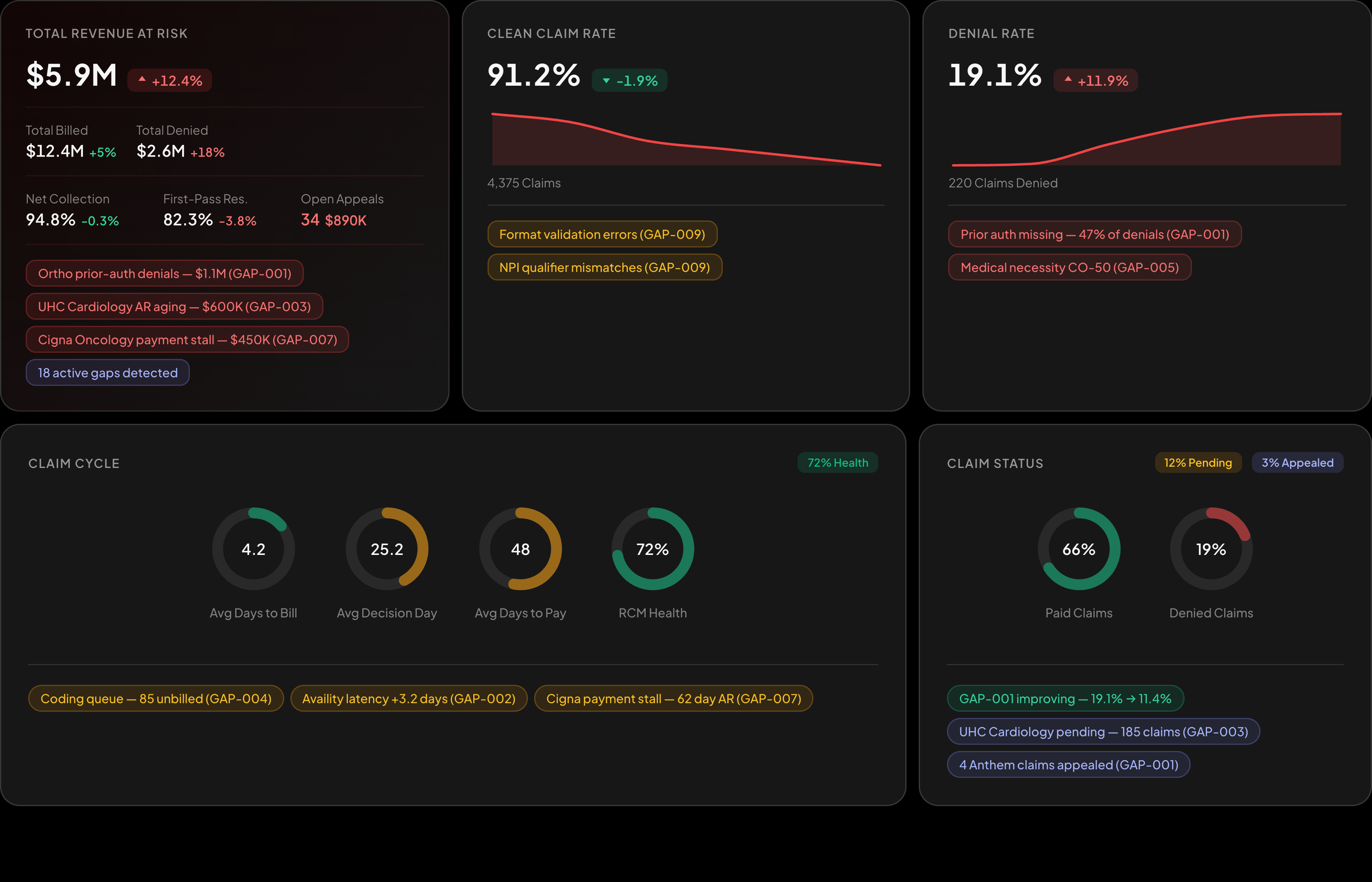

Research showed the real burden wasn’t the lack of dashboards—it was the constant mental overhead of hunting for problems across disconnected tools. I reframed the product around AI agents that watch streams of operational data, surface suspected revenue gaps, and guide users directly to root causes, so the experience starts with answers rather than raw metrics.

Outcomes

Manual reporting time dropped from about 12 hours per week to under 30 minutes.

Root‑cause detection moved from quarterly reviews to near real‑time monitoring.

The platform identified 2.4M USD in recoverable revenue in the first 90 days.

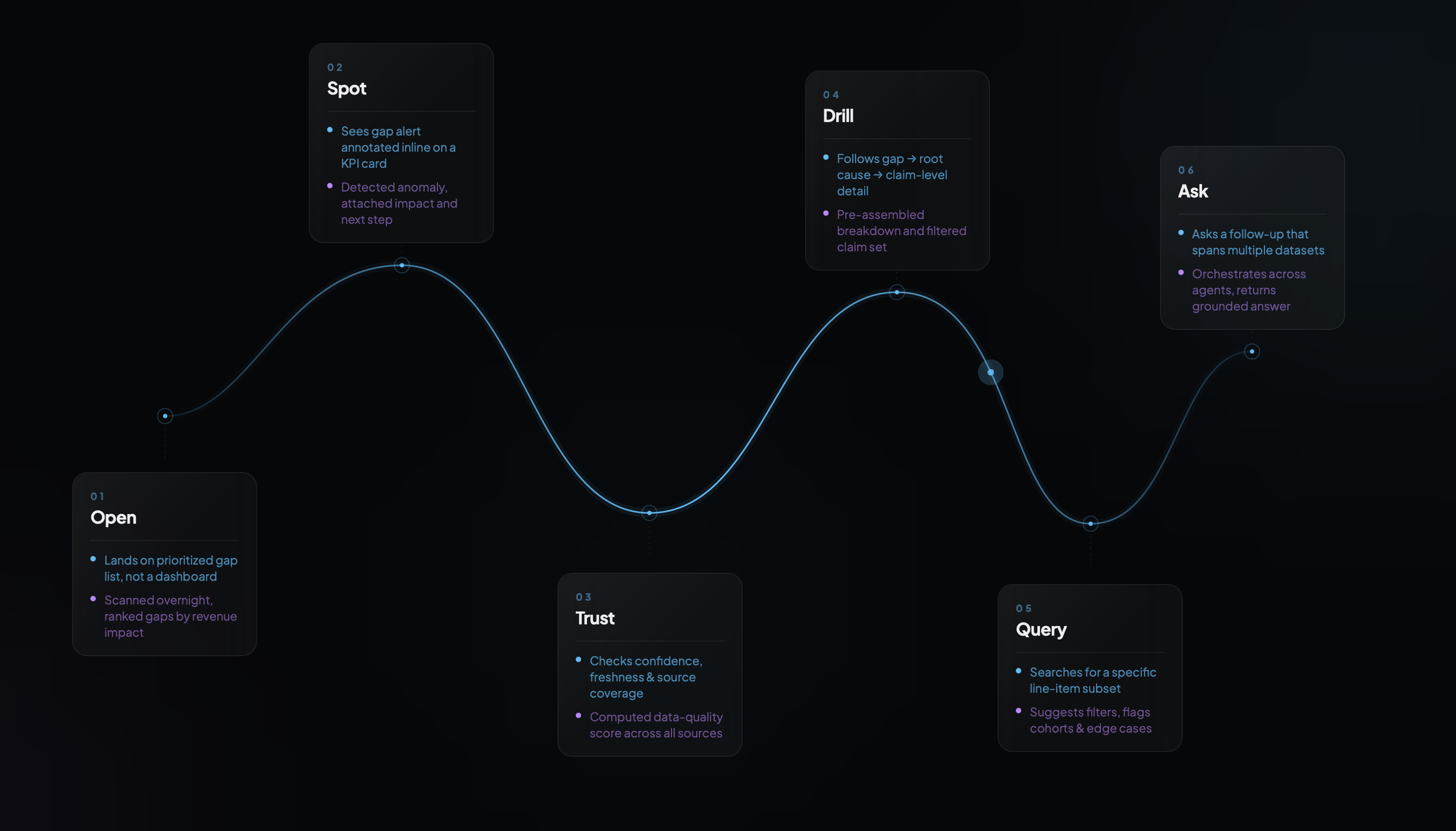

Journey

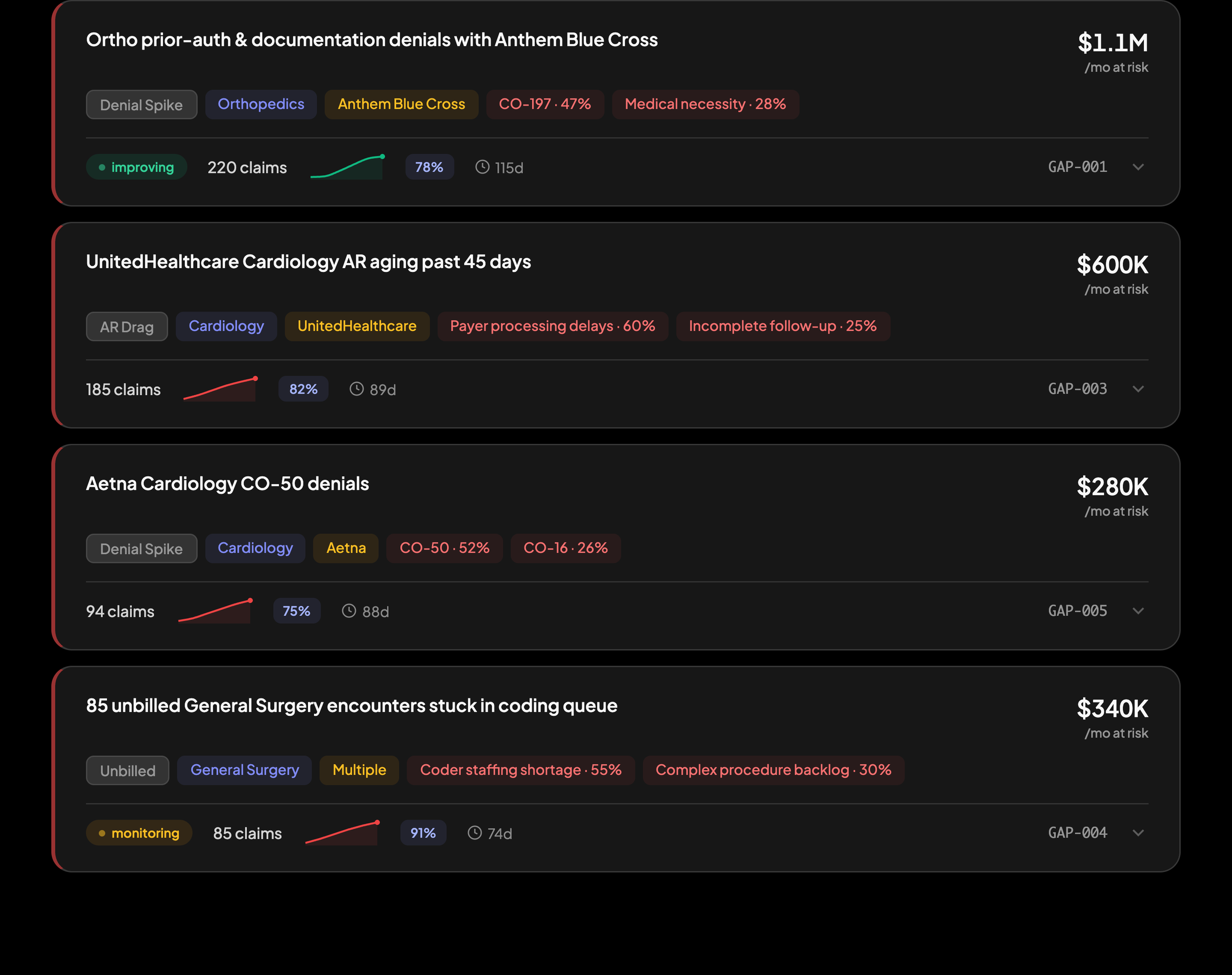

Users land on a prioritized list of revenue gaps rather than a dashboard, with each gap annotated with impact, confidence, and a clear next step. Behind every interaction, from spotting an anomaly to drilling into root causes to asking a cross-dataset follow-up, agents have already scanned, scored, and assembled the evidence, so the user’s job is judgment, not data assembly.

Insights need to live where attention lives

Early versions buried AI‑detected gaps in a side panel that users rarely opened; they scanned KPI tiles and moved on. I redesigned the KPI cards so each one has an embedded “gap” agent that annotates the metric in place with issues, impact, and next steps, putting insights exactly where attention already is.

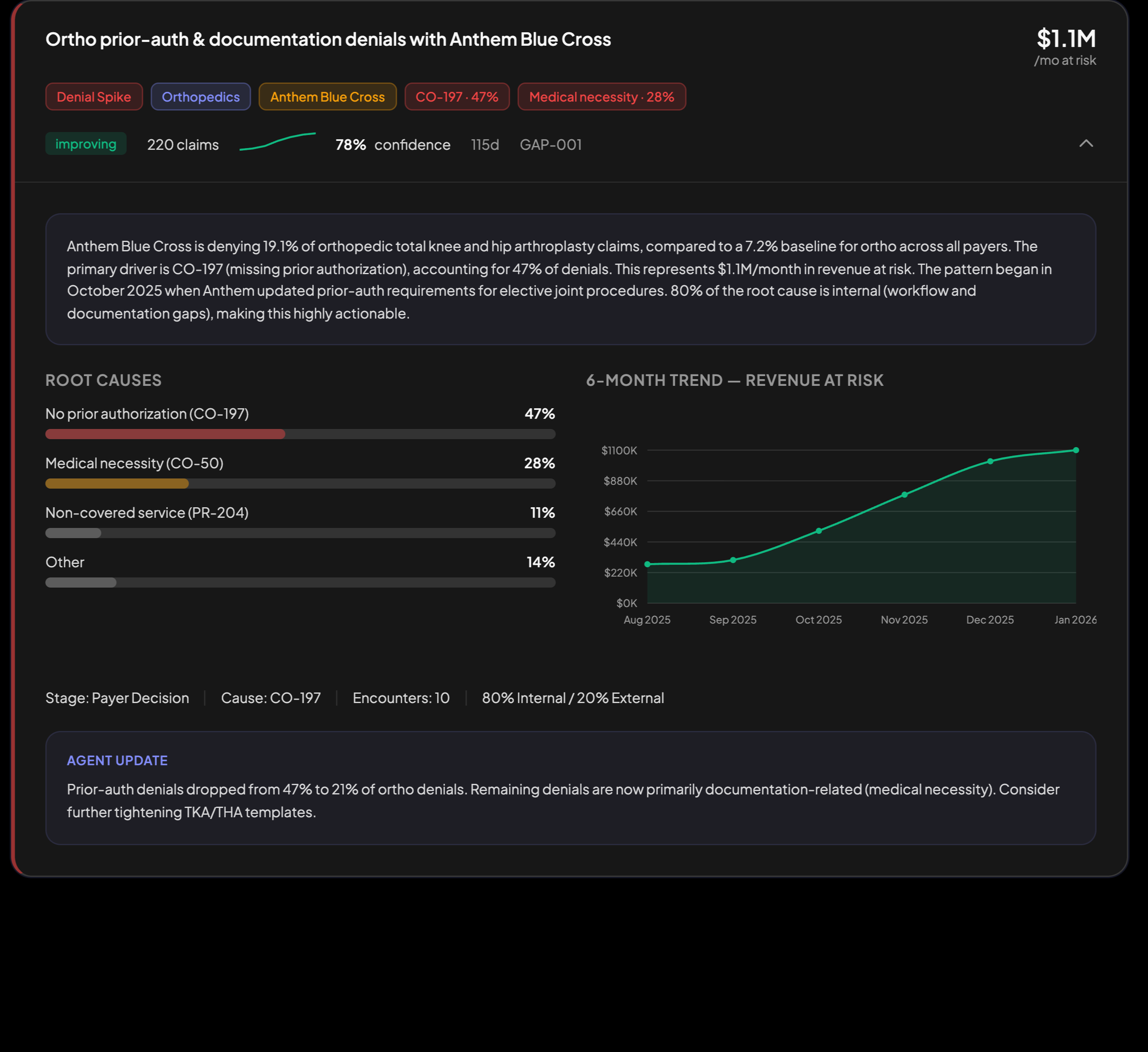

Root causes beat dashboards

CFOs and RCM leads told us they didn’t want to explore data; they wanted to know what to fix and who should own it. I restructured navigation so users land on a prioritized list of AI‑detected gaps, each representing a root‑cause cluster, and only then drill into supporting metrics as needed.

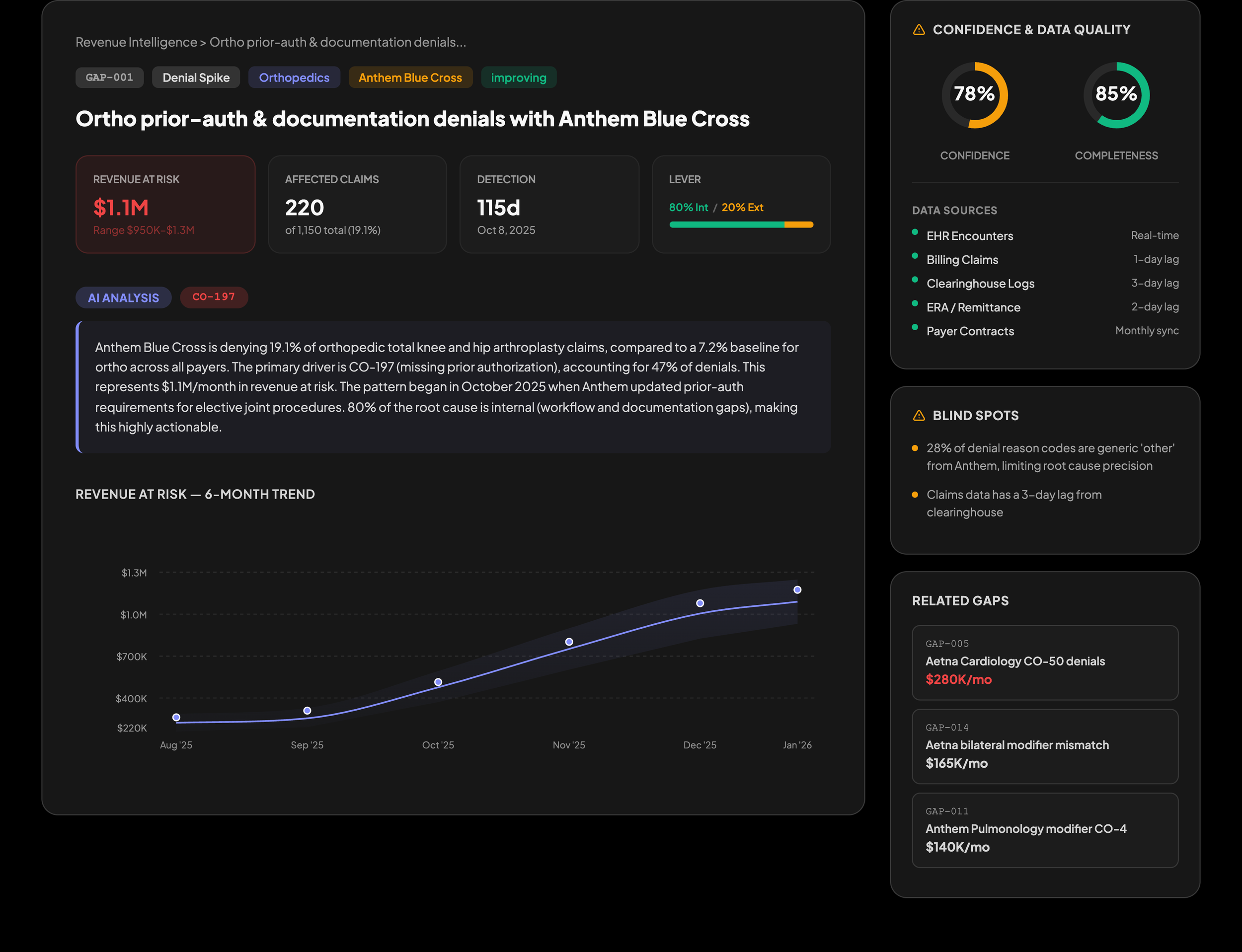

Dirty data requires visible confidence

Healthcare data is incomplete, delayed, and heavily interdependent, with claims taking more than 45 days to settle. I made every gap explanation carry visible confidence signals—data freshness, source coverage, and known blind spots—so users can quickly judge when to act and when to treat an insight as a directional prompt.

Investigation needs a path, not just data

When users clicked into a gap, they often stalled because they didn’t know how to proceed, even though the data was present. I designed a guided three‑step drilldown—Gap summary, Root‑cause breakdown, Individual claims—that mirrors how experienced analysts investigate, giving less technical users a clear path from “there’s a leak” to “here is the exact set of claims to fix.”

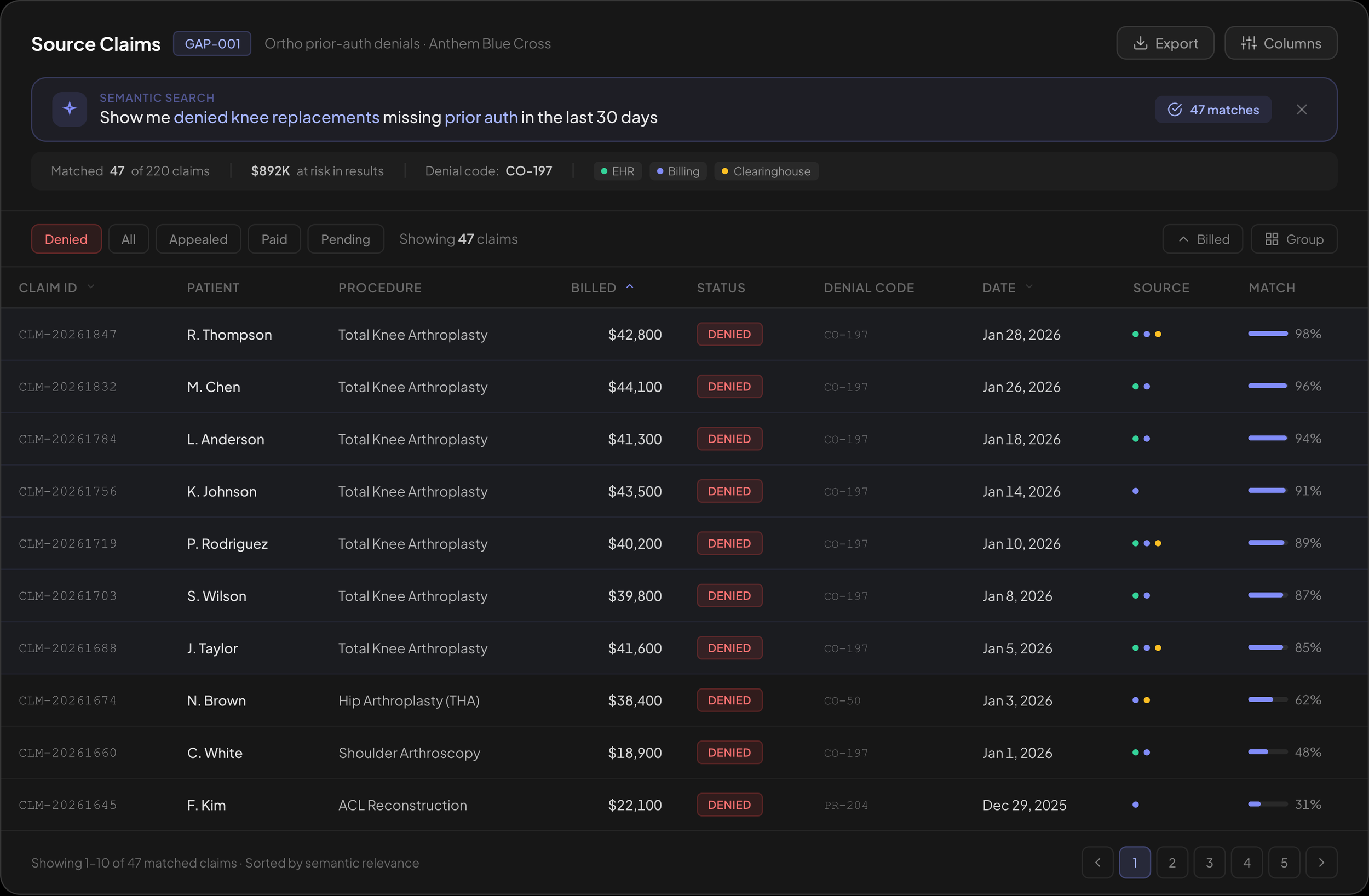

Queryable data

Users still needed direct control when chasing specific questions, like finding a subset of line items behind a pattern. I added semantic and field‑aware search over curated datasets so they can slice to exactly what they need while keeping AI in the loop to suggest filters, cohorts, and edge cases to examine.

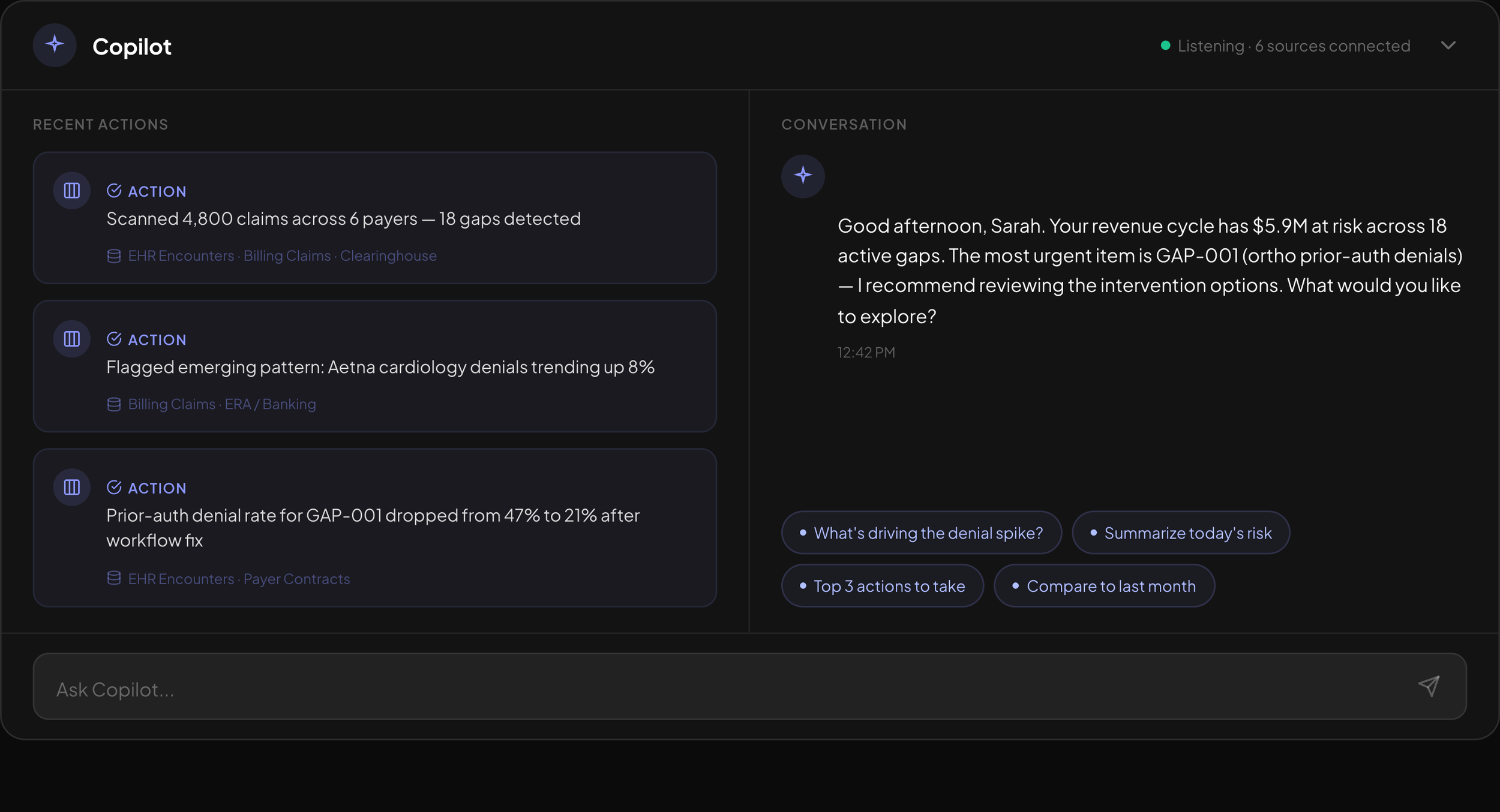

Conversation beats queries

Teams frequently had follow‑up questions that crossed datasets or combined multiple gaps into a single story. I designed a Q&A Copilot that starts with context‑specific suggestion pills instead of a blank chat box, then orchestrates queries across the underlying agents to answer questions in natural language without forcing users back into dashboards.